Want to jump straight to the code? It lives at github.com/lexvicacom/monoblok.

A car publishes its engine RPM ten times a second. A market data feed ticks on every quote. A cheap temperature sensor posts a fresh reading every two seconds, mostly identical to the last one. Data moves quickly, but most of it is noise: a publisher that doesn’t know what its subscribers care about, and a fleet of subscribers each writing the same defensive code to round, debounce, and ignore the boring readings.

Every team I’ve worked on has written some version of that subscriber, usually three or four times, usually subtly differently each time.

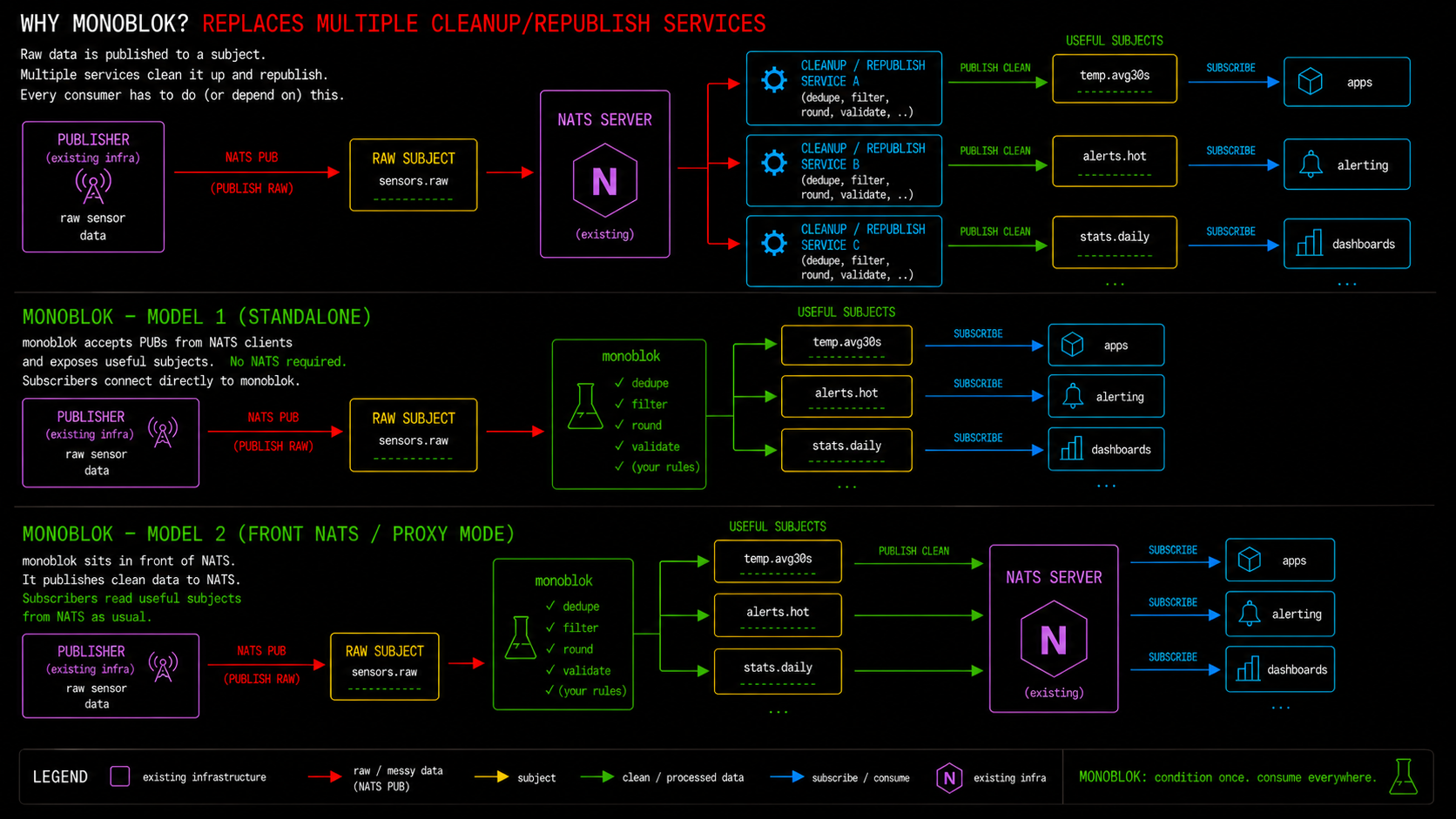

monoblok is a broker that does that work once, before a message reaches any subscriber. It sits between your publishers and your real message broker, and conditions the signal in flight: deadband, debounce, dedupe, demux JSON payloads into per-field subjects. The cleanup logic is stable, configured once, instead of being re-implemented in every subscriber.

The pattern: publishers PUB to monoblok instead of directly to NATS, using the exact same NATS client. No code changes. Monoblok does the conditioning, then forwards the tidy subjects to your real cluster. Subscribers get a stream that’s already correct.

This is useful for smoothing out output from jittery sensors (the £2.99 Temu kind), high-frequency market data, fleet telemetry, anything where the data moves fast but most of the movement isn’t worth a downstream message.

monoblok is partially NATS-compatible and written in deliberately simple C, which yields a fast, compact binary with low hardware requirements. It is configured through a small signal-conditioning DSL called patchbay.

Key features

The last-value cache (LVC). Every subject has an implicit cache of its most recent value. Subscribe to $LVC.foo.bar and you immediately receive the cached value (if any), then the live stream of subsequent publishes. Wildcards work too. It’s on by default and costs a couple of percent overhead.

Patchbay. A small S-expression DSL that runs at the broker, per message. You write rules of the shape (on SUBJECT-FILTER BODY) and the body gets evaluated when an incoming subject matches. The vocabulary borrows heavily from electronics: squelch to suppress duplicates, deadband to ignore small wobbles, quantize to snap to a grid, plus a family of O(1) windowed aggregates (moving-avg, moving-max and friends). Here’s the canonical example from the readme:

    (on "sensors.*"
      (-> payload-float
          (round 1)
          (squelch)
          (publish-to (subject-append "stable"))))

Round to 1 decimal place, drop it if it hasn’t changed, republish to sensors.<whatever>.stable. That’s the lot. If the grammar feels unfamiliar, fear not: Claude Code handles it quite happily once pointed at the patchbay prompt further down.
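If S-expressions aren't your thing, here is roughly what that rule does, as a toy Python model. This is a sketch of the semantics as described above, not monoblok's implementation: the class name, the text-encoded float payload and the "compare with the previous value on the same subject" reading of squelch are all my assumptions.

```python
from typing import Dict, Optional, Tuple


class RoundSquelch:
    """Toy stand-in for (-> payload-float (round N) (squelch) (publish-to ...))."""

    def __init__(self, decimals: int = 1, suffix: str = "stable") -> None:
        self.decimals = decimals
        self.suffix = suffix
        self.prev: Dict[str, float] = {}  # last value seen, keyed by subject

    def on_message(self, subject: str, payload: bytes) -> Optional[Tuple[str, bytes]]:
        # Assumption: payloads are ASCII-encoded decimal numbers.
        value = round(float(payload.decode()), self.decimals)
        if self.prev.get(subject) == value:
            return None  # unchanged after rounding: squelched
        self.prev[subject] = value
        return f"{subject}.{self.suffix}", str(value).encode()


rule = RoundSquelch(decimals=1)
readings = [b"21.44", b"21.41", b"21.38", b"21.52", b"21.49"]
out = [m for m in (rule.on_message("sensors.kitchen", r) for r in readings) if m]
print(out)  # five noisy readings in, two republished messages out
```

Five readings go in, and only the two that differ after rounding come out on sensors.kitchen.stable.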

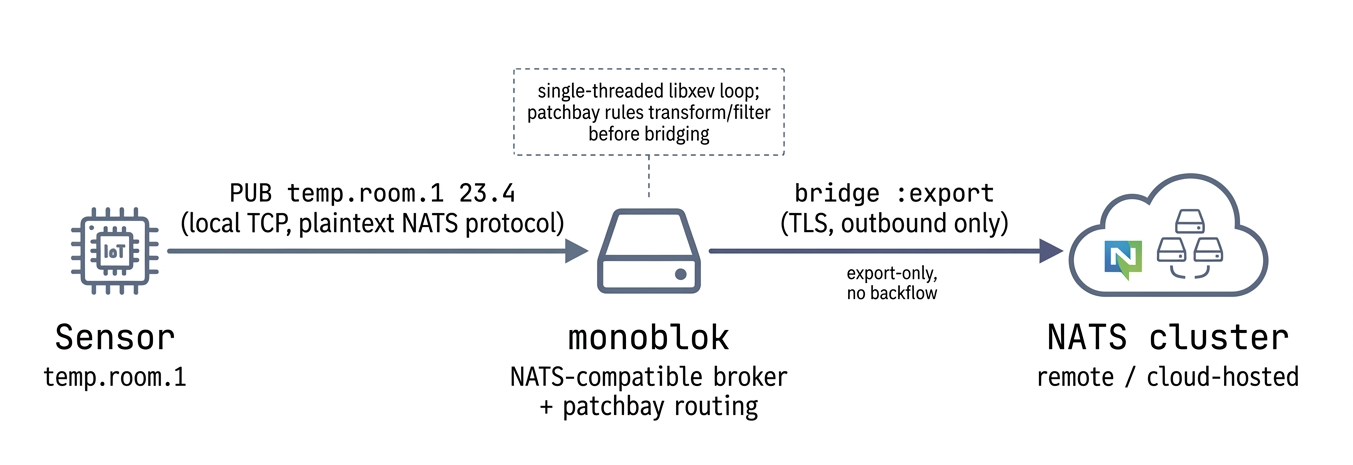

NATS bridge. An outbound bridge to a real NATS cluster ships in the default build. You nominate subject filters to export, and matching publishes are forwarded upstream while everything else stays local. The point is that an edge monoblok can do its conditioning work locally and only send the tidy, meaningful subjects on to a production NATS deployment. More on this further down.

Sensors publish to a local monoblok, patchbay rules do the conditioning, and only the tidy subjects of interest get trampolined out to a remote NATS cluster. The subjects remain in the local monoblok for local subscribers.

Putting monoblok in front of NATS for existing publishers is an elegant, lightweight addition. Work that previously had to be handled by each consumer can now be done once by monoblok. Consumers of the processed subjects are none the wiser: they just connect to the same production NATS environment. NATS is not strictly necessary, though. In smaller or more experimental setups, it is fine to point those consumers at monoblok directly.

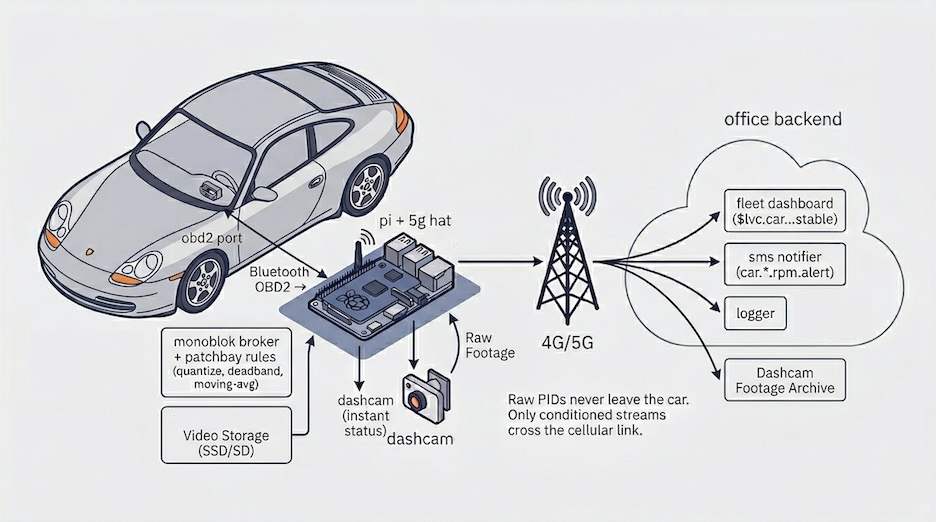

A worked example: catching over-revs at Peter’s Porsche Rentals

Peter runs a boutique rental outfit with a dozen 911s on the books. Customers pay a lot of money per day and in exchange may enjoy bouncing off the rev limiter for fun. Peter would like to know about that, ideally while the car is still out, so he can have a conversation at handover rather than discovering a trashed engine three services later.

A £10 Bluetooth OBD2 dongle plugged into the diagnostic port exposes a firehose of PIDs: RPM, coolant temperature, throttle position, short and long-term fuel trims, O2 sensor voltages, intake manifold pressure, the lot. A small Python script on a Raspberry Pi tucked behind the glovebox polls the dongle over RFCOMM and publishes each reading to car.<vin>.<pid>.
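For a flavour of what that script does with each reading, here is a minimal sketch of the decode-and-name step. The decoding formulas are the standard OBD-II mode 01 ones; the subject tokens for speed and throttle, the text-encoded float payload and the placeholder VIN are my assumptions, and the Bluetooth polling and the actual NATS publish call are left out.

```python
from typing import Callable, Dict, Tuple

# Standard OBD-II mode 01 decoders: PID -> (subject token, decode function).
DECODERS: Dict[int, Tuple[str, Callable[[bytes], float]]] = {
    0x0C: ("rpm", lambda d: (256 * d[0] + d[1]) / 4),   # engine speed, rpm
    0x05: ("coolant", lambda d: d[0] - 40),             # coolant temperature, °C
    0x0D: ("speed", lambda d: d[0]),                    # vehicle speed, km/h
    0x11: ("throttle", lambda d: d[0] * 100 / 255),     # throttle position, %
}


def to_message(vin: str, pid: int, data: bytes) -> Tuple[str, bytes]:
    """Turn one raw PID response into a (subject, payload) pair."""
    name, decode = DECODERS[pid]
    # Assumption: the broker parses payloads as text-encoded floats.
    return f"car.{vin}.{name}", str(float(decode(data))).encode()


# "VIN123" is a placeholder, not a real VIN.
print(to_message("VIN123", 0x0C, bytes([0x1A, 0xF8])))
print(to_message("VIN123", 0x05, bytes([0x7B])))
```

Each pair can then be handed to whichever NATS client the script uses.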

Because monoblok compiles for ARM, the broker itself runs on the same Pi. A 5G hat gives it an uplink, so conditioned streams go straight to Peter’s reporting system without ever shipping raw PIDs over the cellular link. Conditioning at the edge, analysis in the cloud. (For an even smaller edge, see tinyblok: same idea on an ESP32-C6.)

What Peter wants:

- A clean per-car telemetry stream for the fleet dashboard. RPM, coolant, speed, the usual.

- An over-rev alert the moment a car holds the engine above 7500rpm for more than a couple of seconds. One brief blip past redline on a downshift is forgivable; ten seconds in the limiter is a phone call.

- When he opens the dashboard in the morning, the current state of every car on hire, immediately. No “waiting for first reading” spinner.

- If the car has issues, he may want to provide pre-emptive assistance.

RPM updates many times a second and wobbles constantly at a steady throttle. Coolant barely moves once the engine is warm. Publishing all of this unconditioned over a metered 5G connection is wasteful. Conditioning the raw feed is exactly the sort of thing patchbay was built for.

Deadband, incidentally, is exactly what most consumer cars already do to their own gauges. The coolant temperature needle on a modern dash sits stubbornly in the middle across roughly 75-105°C of actual sensor reading, and only twitches if things get genuinely cold or genuinely hot. VW, Ford and others figured out a long time ago that a needle tracking the real value would have drivers ringing the dealership every time they sat in traffic on a warm day. Same primitive, different reason for wanting it.

    ;; RPM: quantize to 50rpm buckets, drop duplicates, republish
    (on "car.*.rpm"
      (-> payload-float
          (quantize 50)
          (squelch)
          (publish-to (subject-append "stable"))))

    ;; Coolant temp: 1°C deadband is plenty
    (on "car.*.coolant"
      (-> payload-float
          (round 0)
          (deadband 1.0)
          (publish-to (subject-append "stable"))))

    ;; Over-rev alert: fire once when the 20-sample moving average
    ;; crosses above 7500rpm.
    (on "car.*.rpm"
      (-> (> (moving-avg 20 payload-float) 7500.0)
          (rising-edge)
          (publish-to (subject-append "alert"))))

    ;; All-clear: fire once when the same moving average drops back below.
    (on "car.*.rpm"
      (-> (> (moving-avg 20 payload-float) 7500.0)
          (falling-edge)
          (publish-to (subject-append "ok"))))

The over-rev rule is the one Peter cares about. A single sample over 7500 gets averaged with the surrounding values and ignored; twenty samples in a row up there, and rising-edge fires exactly once on the crossing rather than spamming an alert every sample while the car sits in the limiter. The mirror rule with falling-edge emits an all-clear the moment the average drops back. A transition form collapses the two into one (same semantics, one shared prev slot, one ring buffer instead of two); the two-rule version is shown here because it makes the primitives visible. State is per rule per subject, so each car has its own independent ring buffer.
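To make the blip-versus-sustained behaviour concrete, here is a toy Python model of one car's state: a 20-sample ring buffer with a running sum (so each update is O(1)) and a single remembered "was over" flag, much like the combined form. It is a sketch under my own assumptions, notably that the average is taken over however many samples have arrived while the window is still filling; the class and method names are made up.

```python
from collections import deque
from typing import Deque, List, Optional


class OverRevDetector:
    """Moving average over a fixed window, plus rising/falling edge detection."""

    def __init__(self, window: int = 20, limit: float = 7500.0) -> None:
        self.samples: Deque[float] = deque(maxlen=window)
        self.total = 0.0          # running sum keeps each update O(1)
        self.limit = limit
        self.prev_over = False    # the one remembered state for edge detection

    def feed(self, rpm: float) -> Optional[str]:
        if len(self.samples) == self.samples.maxlen:
            self.total -= self.samples[0]  # oldest sample is about to be evicted
        self.samples.append(rpm)
        self.total += rpm
        over = self.total / len(self.samples) > self.limit
        event = None
        if over and not self.prev_over:
            event = "alert"   # rising edge: fires once on the crossing
        elif not over and self.prev_over:
            event = "ok"      # falling edge: fires once on the way back down
        self.prev_over = over
        return event


car = OverRevDetector()
drive: List[float] = (
    [3000.0] * 20      # cruising
    + [8200.0]         # one blip past redline on a downshift
    + [3000.0] * 19
    + [7900.0] * 20    # sitting in the limiter
    + [3000.0] * 20    # back to cruising
)
events = [e for e in (car.feed(rpm) for rpm in drive) if e]
print(events)  # the blip is absorbed; the sustained run yields one alert, one all-clear
```

Eighty samples go in and exactly two events come out, however long the car sits in the limiter.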

The interesting part is what crosses the 5G link. Raw PIDs at full rate would chew through a SIM’s data allowance for no good reason; most of it is redundant. Conditioning at the edge means the uplink only carries RPM when it moves into a new 50rpm bucket, coolant when it shifts by a degree, and over-rev alerts only when a customer is actually abusing the car. Everything else stays on the Pi. Peter’s backend subscribes to car.*.*.stable and car.*.*.alert and gets a tidy, low-volume feed it can log, graph or react to without having to do its own conditioning.
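A quick back-of-envelope model shows how much the uplink is spared. The three generators below are my reading of the primitives, not monoblok's code: quantize snaps to the nearest multiple of the step, squelch drops a value equal to the previous one, and deadband only emits once the value has moved at least the band away from the last emitted value. The sample data is invented.

```python
from typing import Iterable, Iterator, Optional


def quantize(values: Iterable[float], step: float) -> Iterator[float]:
    for v in values:
        yield round(v / step) * step  # assumption: snap to nearest multiple


def squelch(values: Iterable[float]) -> Iterator[float]:
    prev: object = object()  # sentinel that equals nothing
    for v in values:
        if v != prev:
            prev = v
            yield v


def deadband(values: Iterable[float], band: float) -> Iterator[float]:
    last: Optional[float] = None
    for v in values:
        if last is None or abs(v - last) >= band:
            last = v
            yield v


# Steady throttle at 3000rpm, then at 3200rpm, with a small repeating wobble.
WOBBLE = [0, 7, -5, 12, -9, 3, 15, -12]
raw_rpm = [3000 + w for w in WOBBLE * 5] + [3200 + w for w in WOBBLE * 5]
sent_rpm = list(squelch(quantize(raw_rpm, 50)))

raw_coolant = [88.2, 88.4, 88.6, 88.3, 89.1, 89.4, 90.2, 90.0]
sent_coolant = list(deadband(raw_coolant, 1.0))

print(len(raw_rpm), "raw RPM samples ->", sent_rpm)
print(len(raw_coolant), "raw coolant samples ->", sent_coolant)
```

Eighty RPM samples collapse to two messages, and eight coolant samples to two. The real ratio depends entirely on how the car is being driven, so treat this as an illustration rather than a measurement.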

When Peter opens the fleet dashboard first thing, subscribing to $LVC.car.*.*.stable yields the last known value for every PID on every car without having to wait for the next change. If a logger process restarts, same deal. Useful if you’re trying to work out the state a car was in at the moment something went wrong.
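The replay-then-live behaviour is easy to picture with a toy in-memory broker. This is a sketch, not monoblok's implementation: the class, the replay order and the choice to deliver replayed values under their plain subject are assumptions of mine. The wildcard rules are standard NATS: `*` matches exactly one token and `>` matches one or more trailing tokens.

```python
from typing import Callable, Dict, List, Tuple

Handler = Callable[[str, str], None]


def subject_matches(pattern: str, subject: str) -> bool:
    """NATS-style match: '*' is one token, '>' is one or more trailing tokens."""
    p_tokens, s_tokens = pattern.split("."), subject.split(".")
    for i, p in enumerate(p_tokens):
        if p == ">":
            return i == len(p_tokens) - 1 and len(s_tokens) > i
        if i >= len(s_tokens) or (p != "*" and p != s_tokens[i]):
            return False
    return len(p_tokens) == len(s_tokens)


class ToyBroker:
    """In-memory pub/sub with a last-value cache."""

    LVC_PREFIX = "$LVC."

    def __init__(self) -> None:
        self.last: Dict[str, str] = {}             # most recent payload per subject
        self.subs: List[Tuple[str, Handler]] = []

    def publish(self, subject: str, payload: str) -> None:
        self.last[subject] = payload
        for pattern, handler in self.subs:
            if subject_matches(pattern, subject):
                handler(subject, payload)

    def subscribe(self, pattern: str, handler: Handler) -> None:
        if pattern.startswith(self.LVC_PREFIX):
            pattern = pattern[len(self.LVC_PREFIX):]
            # Replay cached values first, then join the live stream.
            for subject, payload in sorted(self.last.items()):
                if subject_matches(pattern, subject):
                    handler(subject, payload)
        self.subs.append((pattern, handler))


broker = ToyBroker()
broker.publish("car.VIN1.rpm.stable", "2950")
broker.publish("car.VIN2.coolant.stable", "91")
broker.publish("car.VIN1.rpm.stable", "3000")

dashboard: List[Tuple[str, str]] = []
broker.subscribe("$LVC.car.*.*.stable", lambda s, p: dashboard.append((s, p)))
broker.publish("car.VIN2.coolant.stable", "92")  # live update after the replay
print(dashboard)
```

The dashboard gets the latest value for each car straight away (3000 and 91), then the live 92 when it arrives. The superseded 2950 is never seen.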

Once the over-rev alert is sitting on a pub/sub subject rather than buried in a log file, it becomes a hook for anything else you want to hang off it. A small service subscribed to car.*.rpm.alert can push a notification to Peter’s phone the moment it fires. Another can look up the customer against the rental record and fire off a politely-worded text message reminding them that the car is leased, not theirs, and that the limiter exists for a reason.

A third can grab a still from the dashcam, and that one is time-sensitive: the moment you want captured is now, not thirty seconds later when the 5G link comes back after a tunnel or a patch of rural Wales with no signal. That subscriber therefore runs on the Pi itself, on the same broker, and pokes the dashcam over the local network the instant the alert lands on the subject. No uplink required. Because the alert payload carries the offending RPM reading, the subscriber can stamp it straight onto the image before saving: a JPEG with 8,420 RPM burned into the corner is a lot harder to argue with at handover than a log line. The notification and SMS services live back at the office and pick up the same alert whenever the 5G link is healthy again, because the broker buffers anything that couldn’t be delivered. Same subject, two very different latency and connectivity profiles, but with no extra plumbing.

These are just plain subscribers to a clean, meaningful stream. You can add or remove them without touching the car, the Pi or the patchbay rules.

Implementation notes

Everything besides networking runs in an event loop provided by the excellent libxev, so you get kqueue, io_uring, epoll or IOCP depending on where you run it. No threads or locks, zero-copy fan-out. It speaks enough of the NATS wire protocol that any NATS client can connect. This is rather convenient because it means you can drop it in alongside existing tooling without writing a client library first, or simply replace NATS as an experiment.

Sitting in front of a real NATS cluster

An outbound bridge to a real NATS cluster ships in the default build. Configuration is a single optional form in the patchbay file:

    (bridge
      :servers ("tls://connect.ngs.global:4222")
      :creds "/etc/monoblok/ngs.creds"
      :tls true
      :name "monoblok-prod-1"
      :export ("telemetry.>" "alerts.>"))

It’s export-only: publishes whose subject matches any :export filter are forwarded to the remote cluster, everything else stays local. Local subscribers are served before the forward, so edge consumers don’t wait on the uplink.
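The decision the bridge makes per publish can be modelled in a few lines. This is a sketch of the behaviour described above, not the bridge's code: the function names and the callback-based "uplink" are made up, and the matcher follows standard NATS wildcard rules.

```python
from typing import Callable, List, Sequence, Tuple

Handler = Callable[[str, bytes], None]


def subject_matches(pattern: str, subject: str) -> bool:
    """NATS-style match: '*' is one token, '>' is one or more trailing tokens."""
    p_tokens, s_tokens = pattern.split("."), subject.split(".")
    for i, p in enumerate(p_tokens):
        if p == ">":
            return i == len(p_tokens) - 1 and len(s_tokens) > i
        if i >= len(s_tokens) or (p != "*" and p != s_tokens[i]):
            return False
    return len(p_tokens) == len(s_tokens)


def route(subject: str, payload: bytes,
          local_subs: Sequence[Tuple[str, Handler]],
          export_filters: Sequence[str],
          forward: Handler) -> None:
    # Local subscribers are served first, so they never wait on the uplink.
    for pattern, deliver in local_subs:
        if subject_matches(pattern, subject):
            deliver(subject, payload)
    # Only subjects matching an :export filter leave the box.
    if any(subject_matches(f, subject) for f in export_filters):
        forward(subject, payload)


log: List[Tuple[str, str]] = []
local = [(">", lambda s, p: log.append(("local", s)))]
export = ("telemetry.>", "alerts.>")
upstream: Handler = lambda s, p: log.append(("upstream", s))

route("telemetry.car1.rpm", b"3000", local, export, upstream)
route("raw.car1.rpm", b"3012", local, export, upstream)
print(log)
```

The telemetry subject is delivered locally and then forwarded; the raw subject is delivered locally and goes no further.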

Back to Peter’s rental fleet: the Pi in each car keeps running its conditioning rules locally, and the bridge forwards only car.*.*.stable and car.*.*.alert up to the office NATS cluster. Raw PIDs still never cross the 5G link, but now the uplink target is a proper cluster with persistence and replication.

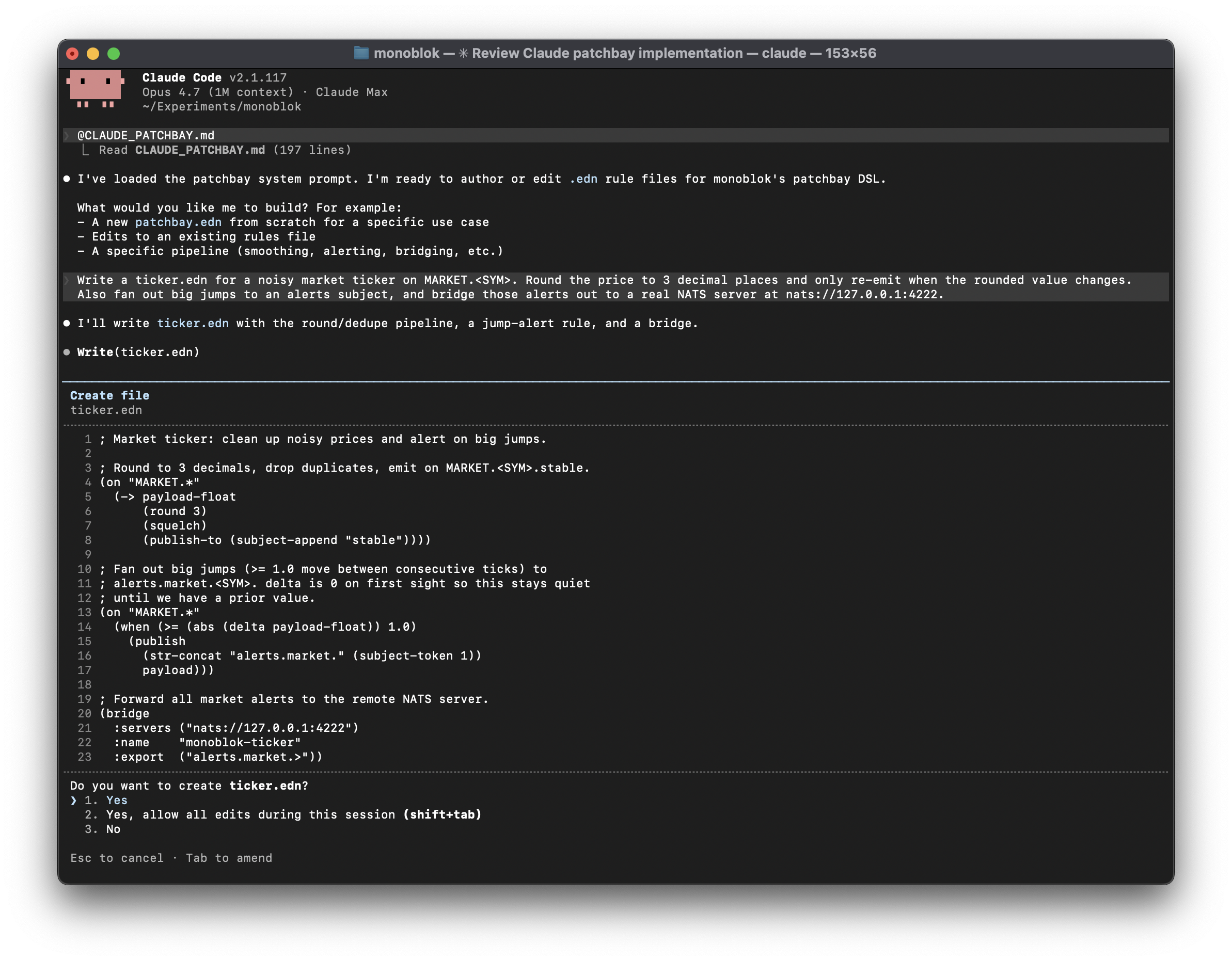

Using patchbay with Claude Code

Writing patchbay rules by hand is fine once you’ve internalised the primitives, but it’s much nicer to have Claude Code do it for you. There’s a self-contained system prompt in the repo, claude-patchbay.md, that teaches the model the grammar, the bound symbols, the idioms and the anti-patterns.

Append it to your project’s CLAUDE.md and Claude Code will pick it up whenever you’re editing rule files:

curl -fsSL https://raw.githubusercontent.com/lexvicacom/monoblok/main/docs/claude-patchbay.md >> ./CLAUDE.md

Or inline it into a one-off prompt with @claude-patchbay.md. Handy.

Benchmarks

Getting meaningful numbers turned out trickier than I expected. Single-row variance on a laptop is large, the bench client (nats CLI, itself a Go process) can be the bottleneck on some rows, and battery vs AC throttling alone roughly halves throughput on Apple Silicon (face palm). So no headline percentages here; run scripts/bench-with-nats-server.sh on your own hardware if numbers matter to you.

The shape of the comparison vs. nats-server is consistent across runs, though:

- nats-server wins on pure publish throughput on big boxes. Multi-threaded acceptance and a battle-hardened parse loop both help when there’s no fan-out to amortise the cost over and there are spare cores to spread it across. On small ARM VPSes the gap narrows or disappears: nats-server has less parallelism to exploit, and monoblok’s single-threaded design has no coordination overhead to pay.

- Roughly comparable at low fan-out (1-10 subscribers per publish).

- monoblok scales better with subscriber count. The single-threaded deduped-kicks fan-out avoids the per-subscriber lock work a multi-threaded server pays. Crossover sits somewhere around 10-30 subscribers; the further past that you go, the bigger the lead.

Worth keeping in mind: nats-server has a decade of production performance work behind it. Any monoblok win above should be read as “the single-threaded design happens to fit this workload shape well”, not “monoblok is faster than NATS”. The right tool for most pub/sub deployments is still nats-server; monoblok is for the cases where the patchbay or LVC actually earn their place, and “fast enough on a small box” is a happy side-effect of the design rather than the headline.

Patchbay overhead scales with matching rules per PUB, not total rules in the file: pub-heavy workloads take a ~20% hit once a rule starts firing, fan-out workloads stay close to break-even.

Why this is all interesting

The conventional logic is “broker moves bytes, application does logic.” That’s fine and largely correct, but there’s a category of logic, signal conditioning, that you could argue belongs at the broker. It’s stateless from the application’s point of view, it’s the same boring code reimplemented in every consumer, and it benefits enormously from being applied once, centrally, before fan-out.

Putting a small DSL at the broker for this kind of work is a nice middle ground. It’s not trying to be Flink, Beam or Kafka Streams. It’s just a few primitives, declared once, that turn raw sensor noise into something useful before it ever leaves the broker. The LVC then makes late-joining subscribers a non-event, which is the other thing every realtime app ends up reinventing.

Swap office temperature sensors for market data ticks where you want to deadband out the noise and only emit on meaningful moves, fleet telemetry from a few thousand vehicles where most of the GPS jitter is uninteresting, IoT estates with flaky sensors that need smoothing before anyone trusts the readings, or gaming and trading dashboards where late-joining clients shouldn’t have to wait for the next event to see current state. Same primitives, different domains.

There are many loose ends to tidy up: a TTL on last-value cache entries so stale state doesn’t linger forever or grow unbounded, maybe TLS (or just rely on an NLB to terminate it), proper structured logging, and a resilience story. The microcontroller direction has since turned into tinyblok, an ESP32-C6 running a codegen’d patchbay and publishing conditioned streams over Wi-Fi, swapping the Pi for something an order of magnitude cheaper and lower-power at the edge.

The code lives at github.com/lexvicacom/monoblok and there are both x86 and ARM Linux builds ready to go on the releases page if you want to skip the build step and give it a spin. There’s also a demo server anyone can try out with the NATS CLI, no install required.

If you’ve thoughts or want to chat about this sort of thing, give me a shout or find me on X or LinkedIn.

Patchbay photo by John Barkiple on Unsplash.